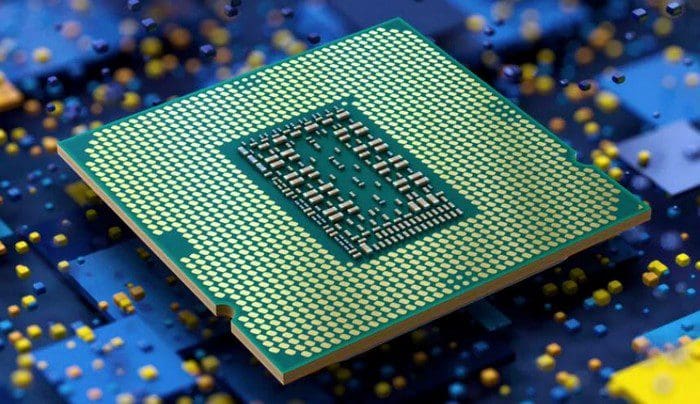

A modern computer is a highly complex machine that performs a vast amount of processing exceptionally quickly, and few of its parts are more complex than the CPU. A modern CPU actively reorders the instructions it receives and has multiple cores, deep pipelines, multiple pipelines per core, a high success rate when predicting which branch to execute speculatively, and, through register renaming, the ability to use more physical registers than the instruction set exposes. Gone are the sequential days when an instruction was processed to completion before the next one was started.

However, one thing that hasn’t changed massively is what happens to each instruction. While the details vary as each generation of processor adjusts the number of pipeline stages to achieve peak performance, the basics of what happens remain pretty constant. A machine cycle, also called the instruction cycle, is the cycle each instruction goes through to be processed.

The Basic Stages of the Pipeline

While each overarching section of the pipeline may be further broken down depending on the specifics of the CPU in use, the concept is the same. First, an instruction has to be fetched from memory. The instruction then needs to be decoded. The decoded instruction needs to be executed. Finally, if necessary, the result of the execution needs to be written back.

Fetching requires the CPU to use the PC, or Program Counter, to determine which instruction to fetch. When the computer first boots up, a specific instruction location is used by default. Part of the fetch process is to update the program counter to point to the start of the next instruction in memory. This means the program counter points to the right place when the next instruction is processed. The fetched instruction is stored in an instruction register.
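The fetch step can be illustrated with a minimal sketch. It assumes a toy memory held in a dictionary, fixed-width 4-byte instructions, and a reset address of 0; the names `fetch`, `memory`, and `INSTRUCTION_SIZE` are made up for this example and do not describe any real CPU.

```python
# Toy model of the fetch stage. The memory layout, the 4-byte instruction
# width, and the reset address of 0 are illustrative assumptions.
INSTRUCTION_SIZE = 4


def fetch(memory, pc):
    """Return (instruction_register, next_pc) for the instruction at pc."""
    instruction_register = memory[pc]  # read the instruction the PC points to
    next_pc = pc + INSTRUCTION_SIZE    # advance the PC to the next instruction
    return instruction_register, next_pc


# Memory is modelled as a mapping from address to instruction text.
memory = {0: "LOAD r1, 10", 4: "LOAD r2, 20", 8: "ADD r3, r1, r2"}

pc = 0  # assumed default location used at boot
ir, pc = fetch(memory, pc)
print(ir, pc)  # LOAD r1, 10 4
ir, pc = fetch(memory, pc)
print(ir, pc)  # LOAD r2, 20 8
```

Returning the updated program counter alongside the instruction mirrors the idea that updating the PC is part of fetching, not a separate step.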

Note: There are exceptions to this. For example, a jump instruction sets the program counter to a different memory address rather than simply to the next instruction. If the jump is small, it may be possible to execute it with no issue. Large jumps, however, may cause a pipeline stall, as the target of the jump needs to be correctly calculated. This is especially an issue when the jump is conditional. In this case, the jump may or may not be taken, and unfortunately, that can only be determined after the execution stage.
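A rough sketch of how a jump changes the next program counter value, assuming a made-up instruction format of tuples such as `("JMP", target)` and `("JZ", target)` and a hypothetical `zero_flag` produced by an earlier instruction:

```python
def next_pc(pc, instruction, zero_flag, size=4):
    """Pick the next program counter value for one toy instruction.

    instruction is a tuple whose first element is the opcode. "JMP" always
    jumps, "JZ" jumps only when zero_flag is set, and anything else falls
    through to the following instruction. All of these names are assumptions.
    """
    opcode = instruction[0]
    if opcode == "JMP":
        return instruction[1]       # unconditional jump: target is known
    if opcode == "JZ":
        # Conditional jump: the outcome depends on a flag that is only
        # available once the earlier instruction has been executed.
        return instruction[1] if zero_flag else pc + size
    return pc + size                # ordinary instruction: next in memory


print(next_pc(8, ("ADD",), zero_flag=False))      # 12
print(next_pc(8, ("JMP", 100), zero_flag=False))  # 100
print(next_pc(8, ("JZ", 100), zero_flag=True))    # 100
print(next_pc(8, ("JZ", 100), zero_flag=False))   # 12
```

The `JZ` case shows why a conditional jump is awkward for a pipeline: the fetch stage needs the next address immediately, but `zero_flag` is not known until execution has finished.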

Decode and Execute

The decode stage decodes the instruction to work out what needs to be done in the execution stage. One of the essential functions of this stage is to ensure that any data required for execution is directly available. Modern CPUs are incredibly fast. As fast as system RAM is, it just can’t supply data fast enough for the CPU. To alleviate this issue, the CPU has its own cache memory.

While this cache isn’t large, typically less than 100MB total, it is much faster than RAM and has exceptionally high hit ratios. The cache still isn’t fast enough for the CPU, so the data to be operated on must be moved into a register before the instruction is ready to execute. Registers are directly accessible by the CPU with effectively no latency, whereas even the fastest cache tier, L1, has a few clock cycles of latency.

Once the instruction has been decoded and the data, known as operands, has been transferred into the registers, the instruction can be executed. This involves routing the instruction and its data to the execution unit needed to handle the operation. This is the only part of each pipeline that performs the logical operation you might think of as processing. For simple operations, the execute stage typically takes a single clock cycle to complete per instruction. All other stages in the pipeline are necessary overhead to enable this stage. Modern pipelines are considerably longer than the four basic stages, often somewhere between 10 and 25 stages in length.
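As a minimal sketch of decode and execute, assume a made-up three-operand text format `OP dest, src1, src2` and a small table of operations. The names `ALU_OPS`, `decode`, and `execute` are illustrative only and are not taken from any real instruction set.

```python
import operator

# Hypothetical operations the toy execution unit can perform.
ALU_OPS = {"ADD": operator.add, "SUB": operator.sub, "AND": operator.and_}


def decode(text):
    """Split an instruction string into an opcode and three register names."""
    opcode, operand_text = text.split(maxsplit=1)
    dest, src1, src2 = [name.strip() for name in operand_text.split(",")]
    return opcode, dest, src1, src2


def execute(opcode, a, b):
    """Route the operand values to the operation that handles this opcode."""
    return ALU_OPS[opcode](a, b)


# Operands must already be in registers before execution can start.
registers = {"r1": 10, "r2": 20, "r3": 0}

opcode, dest, src1, src2 = decode("ADD r3, r1, r2")
result = execute(opcode, registers[src1], registers[src2])
print(opcode, dest, result)  # ADD r3 30
```

Note that `execute` never touches the register file itself: it receives values and returns a result, leaving the decision of where that result goes to a later step.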

Writeback

Writeback is relatively simple to understand. If the result of the operation needs to be written to a register or to memory, this happens here. This is the last stage in a pipeline, at which point the machine cycle is complete for that instruction. Using a pipeline means that as an instruction clears one pipeline stage, the next instruction can move into that stage. This allows a considerable throughput increase compared to unpipelined operation. It also means that many machine cycles are in progress at once.
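The throughput gain can be estimated with a simplified model. The sketch below assumes an idealized pipeline in which every stage takes exactly one clock cycle and no stalls occur; the function names and the four-letter stage labels are made up for illustration.

```python
STAGES = ["F", "D", "E", "W"]  # Fetch, Decode, Execute, Writeback


def unpipelined_cycles(instructions, stages=len(STAGES)):
    """Each instruction runs through every stage before the next one starts."""
    return instructions * stages


def pipelined_cycles(instructions, stages=len(STAGES)):
    """The first instruction fills the pipeline, then one completes per cycle."""
    if instructions == 0:
        return 0
    return stages + (instructions - 1)


def timeline(instructions):
    """Return one text row per instruction, shifted right by one cycle each."""
    return ["  " * i + " ".join(STAGES) for i in range(instructions)]


print(unpipelined_cycles(100))  # 400
print(pipelined_cycles(100))    # 103
for row in timeline(3):
    print(row)
```

Each row of the timeline is one instruction and each column is one clock cycle, so reading down a column shows several machine cycles in progress at once. Real pipelines fall short of this ideal whenever a stall occurs, for example on a conditional jump.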

Conclusion

A machine cycle, also known as an instruction cycle, is the cycle each machine instruction goes through as it passes through the instruction pipeline of a pipelined CPU. Many instruction cycles can be in progress at once, each at a different stage in the pipeline. While the exact details vary based on the CPU’s pipeline architecture, the basic stages are Fetch, Decode, Execute, and Write Back. Execute is the stage where what you would think of as processing actually happens. All other stages, however, are necessary overhead to achieve that.